- From-cves-scanners-to-sbom-generation

- You are here!

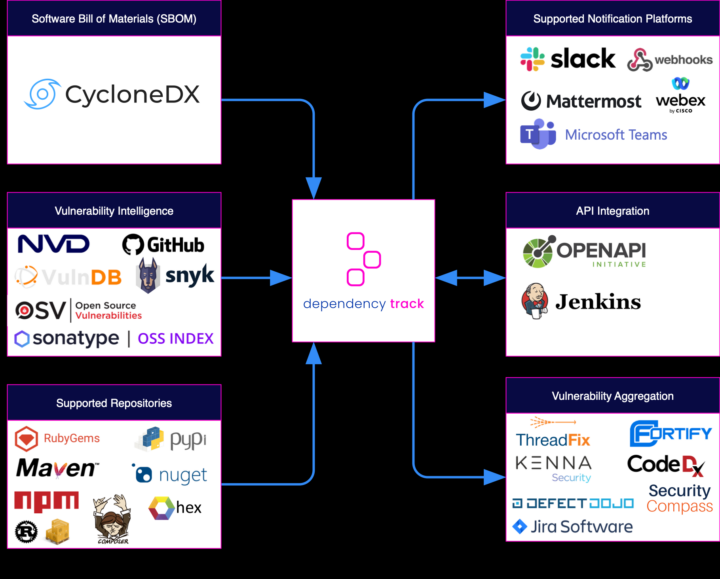

- Dependency Track – To come!

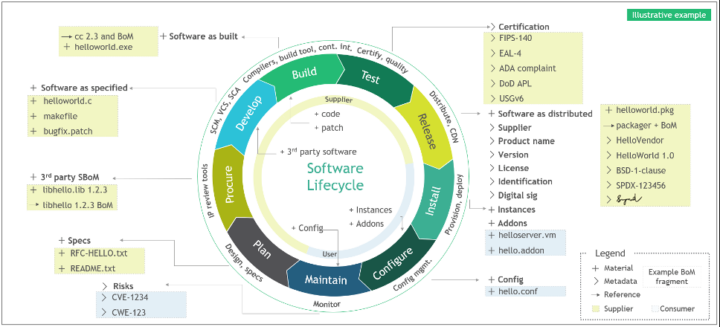

After the deep theoretical dive of the previous article, let’s translate all that jazz into real examples and practical use cases for implementing continuous SBOM file generation.

(Verse 1)

Grype and Syft, two brothers, so true

In the world of tech, they’re both making their due

One’s all about security, keeping us safe

The other’s about privacy, a noble crusade

(Chorus)

Together they stand, with a mission in hand

To make the digital world a better place, you understand

Grype and Syft, two brothers, so bright

Working side by side, to make the world’s tech just right

(Verse 2)

Grype’s the strong one, he’s got all the might

He’ll protect your data, day and night

Syft’s got the brains, he’s always so smart

He’ll keep your secrets, close to your heart

(Chorus)

ChatGPT

[Azure pipelines] Grype + Syft

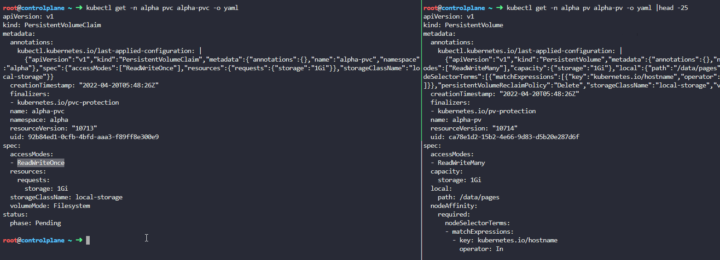

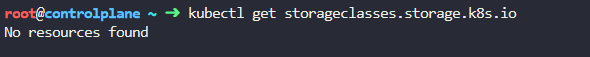

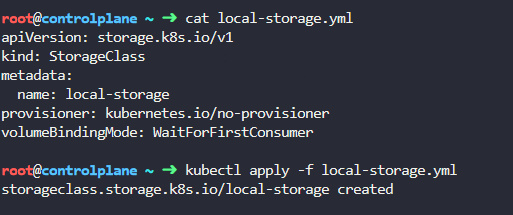

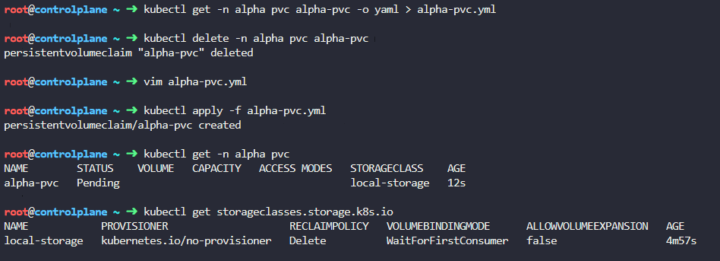

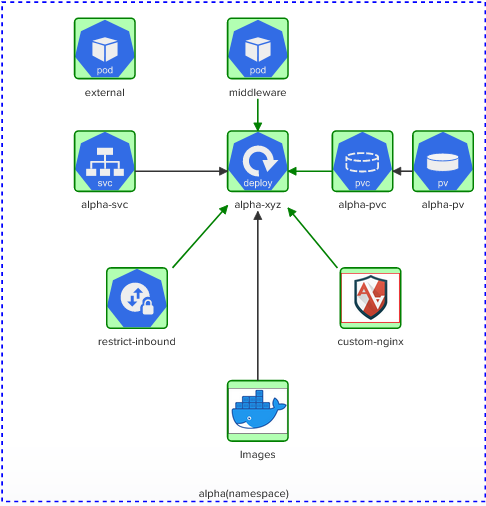

Below is a working example of an Azure pipeline built from two templates: one for a vulnerability scanner job and one for a parallel SBOM generation job.

The first job uses Grype, a well-known open-source project by Anchore, while the second uses its sibling project, Syft.

First, we make this a continuous scan by triggering on pushes to master, so that it runs, for example, after each merge of a completed pull request.
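The two-job layout can be sketched as a top-level pipeline that pulls in one template per job. This is only an illustration: the template file names (`templates/grype-scan.yml`, `templates/syft-sbom.yml`), the parameter names, and the image reference are assumptions, not the names used in the tested pipeline.

```yaml
# azure-pipelines.yml (sketch; file and parameter names are hypothetical)
jobs:
  # Vulnerability scan job, defined in its own template
  - template: templates/grype-scan.yml
    parameters:
      image: myregistry.example.com/myapp:latest   # placeholder image

  # SBOM generation job, defined in a second template
  - template: templates/syft-sbom.yml
    parameters:
      image: myregistry.example.com/myapp:latest   # placeholder image
      sbomFormat: cyclonedx-json
```

Since neither job declares a `dependsOn`, Azure Pipelines is free to run them in parallel when enough agents are available.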

You can specify the full name of the branch (for example, master) or a wildcard (for example, releases/*). See Wildcards for information on the wildcard syntax. For more complex triggers that use exclude or batch, check the full syntax in the Microsoft documentation.

In the Grype template we will:
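As an illustration of the trigger options just mentioned, a sketch might look like the following. The `releases/old*` exclusion is a made-up example, not part of the original pipeline.

```yaml
# Run on every push to master or to any releases/* branch
trigger:
  batch: true            # while a run is in progress, queue a single run for all new pushes
  branches:
    include:
      - master
      - releases/*
    exclude:
      - releases/old*    # hypothetical exclusion, for illustration only
```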

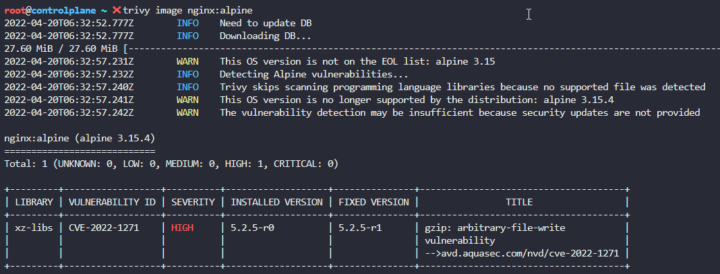

- download the latest binary from the public project

- set the needed permissions to read and execute the binary

- check if there is a grype.yaml with some extra configurations

- run the vulnerability scanner on the given image. The Grype database will be updated before each scan

- save the results in a file named “output_grype”

- use output_grype to check whether any alerts are rated High or above; if so, we also want a Warning to be raised in the Azure DevOps web interface.
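The steps above can be sketched as a job template along these lines. Treat it as a rough outline rather than the tested code: the file name, the `image` parameter, and the `grep` on Grype's default table output are assumptions, and the `grep` is a coarse check (parsing JSON output would be more robust).

```yaml
# templates/grype-scan.yml (sketch; file and parameter names are hypothetical)
parameters:
  - name: image
    type: string

jobs:
  - job: grype_scan
    displayName: Vulnerability scan (Grype)
    pool:
      vmImage: ubuntu-latest
    steps:
      - script: |
          set -euo pipefail
          # Download the latest Grype binary into the working directory
          curl -sSfL https://raw.githubusercontent.com/anchore/grype/main/install.sh | sh -s -- -b .
          # Make sure the binary is readable and executable
          chmod 755 ./grype
        displayName: Install Grype

      - script: |
          set -euo pipefail
          # Use grype.yaml for extra configuration if the repository provides one
          CONFIG_ARGS=""
          if [ -f grype.yaml ]; then CONFIG_ARGS="--config grype.yaml"; fi
          # By default Grype refreshes its vulnerability database before scanning
          ./grype "${{ parameters.image }}" $CONFIG_ARGS --file output_grype
          cat output_grype
          # Coarse check: any High or Critical row raises a warning in Azure DevOps
          if grep -Eq 'High|Critical' output_grype; then
            echo "##vso[task.logissue type=warning]Grype found High or Critical vulnerabilities"
            echo "##vso[task.complete result=SucceededWithIssues;]"
          fi
        displayName: Scan image with Grype
```

Marking the task as `SucceededWithIssues` keeps the pipeline green while still surfacing the warning; to break the build instead, Grype's `--fail-on high` flag makes the scan exit with a non-zero code.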

In the Syft template we will have a similar list of parameters, with the addition of the SBOM file format (json, text, cyclonedx-xml, cyclonedx-json, and many more).

After scanning the image for all its components, we publish the SBOM as an artifact of the pipeline, since we will probably want to pull it from an SBOM analysis tool (e.g. OWASP Dependency-Track, see the previous article).
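A Syft job template following this description might look like the sketch below. The file name, the parameter names (`image`, `sbomFormat`), and the artifact name `sbom` are assumptions made for illustration.

```yaml
# templates/syft-sbom.yml (sketch; file, parameter and artifact names are hypothetical)
parameters:
  - name: image
    type: string
  - name: sbomFormat
    type: string
    default: cyclonedx-json

jobs:
  - job: syft_sbom
    displayName: SBOM generation (Syft)
    pool:
      vmImage: ubuntu-latest
    steps:
      - script: |
          set -euo pipefail
          # Download the latest Syft binary into the working directory
          curl -sSfL https://raw.githubusercontent.com/anchore/syft/main/install.sh | sh -s -- -b .
          chmod 755 ./syft
          # Catalogue the image and write the SBOM in the requested format
          ./syft "${{ parameters.image }}" -o "${{ parameters.sbomFormat }}=$(Build.ArtifactStagingDirectory)/sbom"
        displayName: Generate SBOM with Syft

      # Publish the SBOM so that downstream tools can fetch it from the run
      - publish: $(Build.ArtifactStagingDirectory)/sbom
        artifact: sbom
```

Keeping the format as a parameter means the same template can feed tools that expect CycloneDX as well as tools that expect SPDX.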

Go to the code below. |🆗tested code |

GitHub Actions

On GitHub it is even easier, since Anchore provides Syft as a ready-made action.

By default, this action executes a Syft scan in the workspace directory and uploads the SBOM as a workflow artifact in SPDX format. It also detects when it is run during a GitHub release and uploads the SBOM as a release asset.

A sample would be something like this:

    name: Generate and Publish SBOM

    on:
      push:
        branches:
          - main

    env:
      DOCKER_IMAGE: <your-docker-image-name>
      # Not used by the steps below; kept for a later upload to an SBOM analysis tool
      SBOM_ANALYSIS_TOOL_API_KEY: ${{ secrets.SBOM_ANALYSIS_TOOL_API_KEY }}

    jobs:
      generate_sbom:
        runs-on: ubuntu-latest
        steps:
          - name: Checkout code
            uses: actions/checkout@v4

          # anchore/sbom-action runs Syft under the hood; no Anchore API key is needed
          - name: Generate SBOM using Anchore SBOM Action
            uses: anchore/sbom-action@v0
            with:
              image: ${{ env.DOCKER_IMAGE }}
              format: spdx-json
              output-file: anchore_sbom.json
              # Skip the built-in upload; the next step publishes the file explicitly
              upload-artifact: false

          - name: Publish SBOM
            uses: actions/upload-artifact@v4
            with:
              name: sbom.json
              path: anchore_sbom.json
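If the default behaviour described earlier is enough (scan the workspace directory and upload an SPDX SBOM as a workflow artifact), the job can shrink to a single step. A minimal sketch, assuming the `anchore/sbom-action` action at its `v0` tag; the workflow and job names are arbitrary:

```yaml
name: Default SBOM

on:
  push:
    branches:
      - main

jobs:
  sbom:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      # With no inputs, the action scans the checked-out workspace
      # and uploads the resulting SBOM as a workflow artifact
      - uses: anchore/sbom-action@v0
```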

Code Samples

cve-sbom-azure-pipeline.yml